Summative testing is a structured evaluation used to measure how well a product performs against defined usability criteria—often near the end of a design cycle or immediately after release. In practice, it helps teams answer decision-grade questions such as: “Did we hit our usability targets?”, “Did the redesign improve performance?”, and “Are we better than the benchmark?”

For Singapore-based enterprises and public-sector teams, summative testing is especially valuable because it creates an auditable, comparable measure of experience quality across releases, vendors, and channels—and supports confident go/no-go and investment decisions. Summative evaluations are commonly used to assess a finished product against a benchmark such as a prior version or a competitor.

What Is Summative Testing?

In UX and product development, summative testing (often called summative usability testing or summative evaluation) measures the usability of a complete or near-complete product using predefined metrics. The intent is to quantify performance—typically to establish a benchmark, compare designs, or validate readiness for launch.

What summative testing is: the term is closely related to how “summative” is used in education, where summative assessments evaluate proficiency at the conclusion of an instructional period and are often graded or heavily weighted. The shared theme is that a summative approach “sums up” outcomes at a point where results must stand on their own.

What summative testing is not: it is not primarily an exploratory “let’s see what breaks” activity. That is the domain of formative testing, where teams iterate rapidly to uncover and fix issues before they become expensive to change. Formative evaluation steers the design; summative evaluation measures the outcome.

Summative vs Formative Testing

Both formative and summative testing improve product quality, but they do so in different ways:

- Formative testing is typically run during design and development to understand why users struggle and how to improve the design. It is iterative and often conducted with smaller samples (commonly 5–8 users).

- Summative testing is typically run later—near the end of development or right after launch—to measure usability through defined success metrics and compare performance to a benchmark (prior version, competitor, or internal target). It often uses larger samples (commonly 15–20 users).

A critical nuance: it is a misconception that “summative = quantitative” and “formative = qualitative.” Summative studies are often quantitative, but they can also be qualitative (for example, an expert review comparing your interface to a competitor can be summative if the research goal is comparative performance).

When to Use Summative Testing

Summative testing is most valuable when stakeholders need defensible evidence—measures that can be tracked, compared, and repeated.

Pre-launch validation (go/no-go)

Summative evaluation can be used as a final readiness check. In practice, organisations run a structured study to confirm critical tasks meet defined thresholds before launch.

Post-launch benchmarking and trend tracking

After shipping, a summative study can capture baseline metrics (success rates, time-on-task, error rates) and create a reference point for future releases. This supports performance tracking over time and helps link experience improvements to business outcomes.

Competitive benchmarking

Summative testing is frequently used to compare performance against competitor products or industry benchmarks, especially when leadership needs proof that a redesign or investment improved experience quality.

Regulated or high-risk products

In some industries (for example, medical devices), summative usability testing is treated as a formal validation activity with strict protocols, representative users, and a focus on identifying use errors that could cause serious harm.

What Summative Testing Measures

Summative usability testing is designed to measure usability in terms of effectiveness, efficiency, and satisfaction for representative users completing representative tasks.

In practice, summative testing typically defines usability requirements early, then evaluates whether the product meets those targets. Common task-based measures include:

- Task completion rate / pass-fail

- Time on task

- Error rates

- Overall user satisfaction

These metrics are especially powerful when they are:

- tied to high-value user journeys (e.g., onboarding, checkout, approval flows), and

- comparable to a benchmark such as the previous version or competitor performance.
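As a rough illustration, the four measures above could be computed from per-attempt records along the following lines. This is a minimal sketch, not a prescribed method: the `TaskAttempt` record shape, the field names, and the 1 to 5 satisfaction scale are all assumptions made for the example, as is the choice to report time on task for successful attempts only.

```python
from __future__ import annotations

from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class TaskAttempt:
    """One participant's attempt at one task (hypothetical record shape)."""
    participant: str
    task: str
    completed: bool
    seconds: float
    errors: int
    satisfaction: int  # assumed 1-5 post-task rating


def summarise(attempts: list[TaskAttempt]) -> dict[str, float]:
    """Summarise all attempts at a single task into the four measures."""
    if not attempts:
        raise ValueError("no attempts to summarise")
    successes = [a for a in attempts if a.completed]
    return {
        # Share of attempts that ended in success (pass/fail).
        "completion_rate": len(successes) / len(attempts),
        # Assumption: time on task is averaged over successful attempts only.
        "mean_time_on_task": (
            mean(a.seconds for a in successes) if successes else float("nan")
        ),
        # Average number of errors across all attempts.
        "errors_per_attempt": mean(a.errors for a in attempts),
        # Average post-task rating across all attempts.
        "mean_satisfaction": mean(a.satisfaction for a in attempts),
    }
```

Keeping the record shape and the summary function fixed between studies is one way to make sure later releases are measured the same way as the baseline.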

Common Methods for Summative Testing

Summative testing is not a single method—it is a research intent. Common summative approaches include:

Benchmark usability testing

A benchmark study uses a fixed set of tasks and success metrics so results can be compared across releases. Summative studies often emphasise rigour and repeatability because the goal is measurement, not ideation.

Comparative studies

Summative evaluations frequently compare a redesign to a prior version by capturing metrics such as success rate and time-on-task, then using those results as the baseline for future improvements.
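A comparison of this kind can be sketched as a per-metric delta between the prior version and the redesign. The metric names and the `HIGHER_IS_BETTER` mapping below are hypothetical, and the sketch only reports the direction of change; it makes no claim about whether a difference is statistically meaningful.

```python
from __future__ import annotations

# Hypothetical metric names; True means a larger value is an improvement.
HIGHER_IS_BETTER = {
    "completion_rate": True,
    "mean_time_on_task": False,
    "errors_per_attempt": False,
}


def compare(
    previous: dict[str, float], redesign: dict[str, float]
) -> dict[str, dict[str, float | bool]]:
    """Report the change in each metric from the prior version to the redesign."""
    report: dict[str, dict[str, float | bool]] = {}
    for metric, higher_is_better in HIGHER_IS_BETTER.items():
        delta = redesign[metric] - previous[metric]
        # An improvement is a rise for "higher is better" metrics, a fall otherwise.
        improved = delta > 0 if higher_is_better else delta < 0
        report[metric] = {
            "previous": previous[metric],
            "redesign": redesign[metric],
            "delta": delta,
            "improved": improved,
        }
    return report
```

The redesign's figures in such a report can then be stored as the baseline for the next round of testing.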

Expert review as a summative evaluation

A qualitative expert review can be summative if the goal is to assess overall strengths/weaknesses versus a benchmark (e.g., competitor, heuristic baseline), rather than to iterate on an early prototype.

Surveys and structured questionnaires

In education, summative evaluation often uses graded outputs at the end of a unit; similarly, product teams may use structured instruments to “sum up” perceived experience after task completion—ideally alongside behavioral metrics.

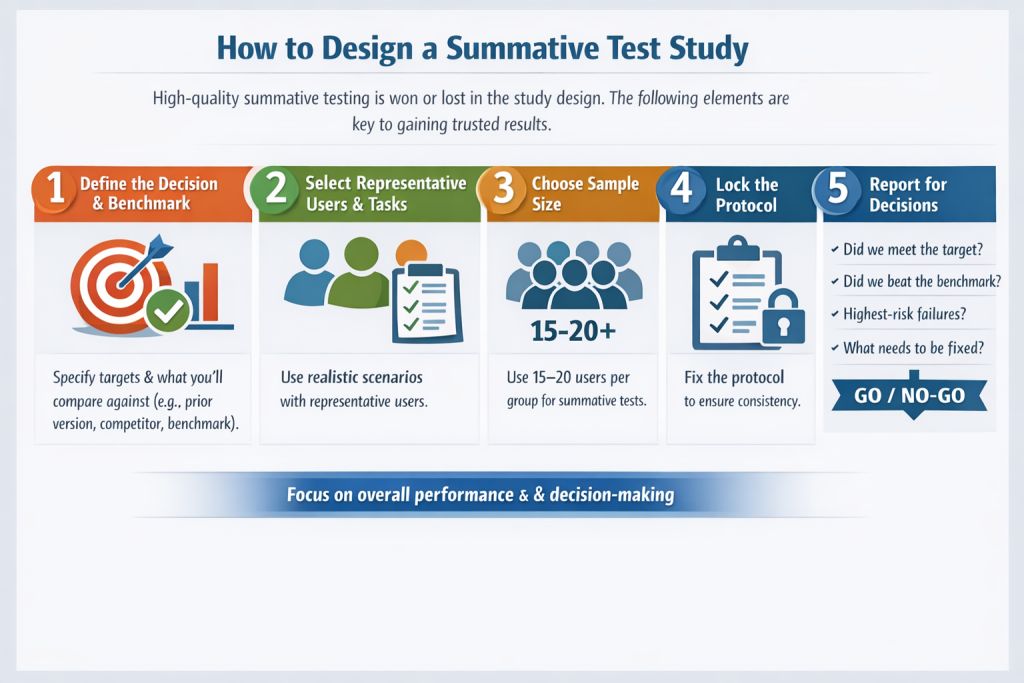

How to Design a Summative Test Study

High-quality summative testing is won or lost in the study design. The following elements usually determine whether leadership will trust the results.

1) Define the decision and the benchmark

Summative evaluations are typically comparative: against a prior version, competitor, or industry benchmark. Start by specifying what “good” looks like (targets) and what you will compare against.

2) Select representative users and realistic tasks

Summative testing requires representative users performing realistic scenarios. This is especially emphasised in formal, structured summative protocols.

3) Choose sample size appropriate to measurement goals

Many summative usability studies use larger samples than formative tests; a commonly cited range is 15 to 20 users for summative usability testing, versus 5 to 8 for formative.

In more formal validation contexts, guidance may require a minimum of 15 participants per distinct user group.
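To see why sample size matters for measurement, it can help to look at how much uncertainty surrounds an observed completion rate. The sketch below uses the Wilson score interval, a standard statistical technique that is brought in here for illustration and is not something the guidance above prescribes. The function name and the 95% default (`z = 1.96`) are assumptions of the example.

```python
from __future__ import annotations

from math import sqrt


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a completion rate of `successes` out of `n`."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p = successes / n
    denom = 1 + z**2 / n
    # Centre of the interval is pulled slightly towards 0.5 for small samples.
    centre = (p + z**2 / (2 * n)) / denom
    half_width = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, centre - half_width), min(1.0, centre + half_width)
```

For example, `wilson_interval(16, 20)` gives a range of roughly 0.58 to 0.92 for an observed 80% completion rate, while `wilson_interval(6, 8)` gives a noticeably wider range for a similar observed rate. Even at summative sample sizes the uncertainty is substantial, which is worth keeping in mind when setting targets.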

4) Lock the protocol

Because the objective is measurement, summative testing typically has less flexibility than formative testing. This increases comparability and reduces ambiguity in results.

5) Report for decisions, not just findings

Summative results should be easy to interpret:

- Did we meet the target?

- Did we improve versus the benchmark?

- What are the highest-risk failures (if any)?

- What must be fixed before release versus planned for the next iteration?

This framing aligns with the summative goal: describing overall performance and supporting decisions (including go/no-go).
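One way to make these reporting questions mechanical is to encode each target with a threshold and a flag for whether a miss is a launch risk. The following is a minimal sketch under those assumptions; the `Target` structure, the `blocks_release` flag, and the rule that any missed release-blocking target means "no-go" are illustrative choices, not a fixed standard.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Target:
    """A predefined usability target for one metric (hypothetical structure)."""
    metric: str
    threshold: float
    higher_is_better: bool
    blocks_release: bool  # assumed flag: a miss on this metric is a launch risk


def evaluate(observed: dict[str, float], targets: list[Target]) -> dict[str, object]:
    """Check observed metrics against targets and sort the misses by urgency."""
    fix_before_release: list[str] = []
    next_iteration: list[str] = []
    for target in targets:
        value = observed[target.metric]
        if target.higher_is_better:
            met = value >= target.threshold
        else:
            met = value <= target.threshold
        if not met:
            bucket = fix_before_release if target.blocks_release else next_iteration
            bucket.append(target.metric)
    return {
        # Assumed rule: "go" only if no release-blocking target was missed.
        "go": not fix_before_release,
        "fix_before_release": fix_before_release,
        "next_iteration": next_iteration,
    }
```

Agreeing the thresholds and the blocking flags before the study runs keeps the go/no-go call from being negotiated after the results are in.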

Summative Testing Process at USER

At User Experience Researchers (USER), summative testing is designed to produce metrics leadership can trust without losing the “why” behind the numbers.

Discovery and success metrics

We align stakeholders on the decision to be made (benchmarking, readiness, competitive comparison) and define usability requirements upfront—task-based targets such as success rate, time-on-task, error tolerance, and satisfaction thresholds.

Study design and recruitment

We specify representative user groups, realistic tasks, and sampling plans that match the measurement goal. For higher-stakes releases, this includes more structured protocols consistent with formal summative approaches.

Execution (remote or in-person)

Summative testing can be moderated or unmoderated. What matters most is consistency of tasks, measurement, and evidence capture so results remain comparable across time and products.

Analysis, benchmarking, and recommendations

We deliver:

- benchmark-ready metrics (so future studies compare apples to apples),

- interpretation against defined targets,

- prioritised fixes (especially for critical task failure and error patterns).

Summative evaluations often support tracking performance over time and can contribute to ROI narratives when tied to product outcomes.

Why Choose USER for Summative Testing

If you need summative testing that stands up to scrutiny from product leadership, compliance stakeholders, and delivery teams, USER is structured for decision-grade work:

- 15+ years of experience (since 2010)

- UX research–driven testing design, balancing metrics with diagnostic insight

- Enterprise and public-sector experience

- Singapore-based project leadership

- Regional engineering capability, supporting end-to-end build and improvement cycles

Case Examples of Summative Testing Projects

The following are anonymised, representative examples of common summative testing engagements.

Example 1: Enterprise employee portal redesign

- Challenge: Leadership needed proof the redesign improved completion of critical HR workflows.

- Approach: Summative benchmark study comparing old vs new flows on task success, time-on-task, and error rates.

- Outcome: Clear performance uplift on priority tasks and an agreed baseline for future quarterly tracking.

Example 2: Public-facing service journey

- Challenge: A launch deadline required a go/no-go readiness signal.

- Approach: Structured summative test of end-to-end tasks with predefined success thresholds and failure severity rules.

- Outcome: Go decision with targeted fixes for the highest-risk failure points prior to release.

Example 3: Regulated-style validation for a high-risk workflow

- Challenge: Errors could lead to significant operational impact, requiring higher protocol rigor.

- Approach: Formalised summative protocol with representative user groups and realistic scenarios; emphasis on error identification and mitigation effectiveness.

- Outcome: Evidence-backed mitigation plan and measurable usability requirements for subsequent releases.

Common Questions About Summative Testing

How much does summative testing cost?

Cost depends on the number of user groups, recruitment difficulty, task scope, and whether you need competitive benchmarking. The main cost driver is usually study complexity, not the tool used.

How long does summative testing take?

A typical cycle includes study design, recruitment, execution, analysis, and reporting. Timelines compress significantly if users are readily available and tasks are well-defined.

How many participants are needed?

Formative tests are commonly run with 5–8 users, while summative tests often use 15–20 users to support measurement and benchmarking.

In more formal validation contexts, guidance may require at least 15 participants per distinct user group.

Can summative testing be run unmoderated?

Yes. Summative testing can be moderated or unmoderated; the critical factor is the consistency of protocols, so metrics remain comparable.

What if a summative test uncovers usability issues?

This is common: even summative studies can uncover issues. The difference is how you treat them: capture and prioritise them for immediate remediation (if they are a launch risk) or for the next iteration, while still using the study to establish a benchmark.

Getting Started With Summative Testing

If you are preparing for a launch, validating a redesign, or establishing a UX benchmark you can track over time, summative testing provides the metrics and confidence to make the right call.

To start efficiently, prepare:

- your key user journeys (top tasks),

- your target user segments,

- your success criteria (what “good” looks like),

- your comparison point (previous version, competitor, or internal target).

USER supports Singapore organisations with summative testing designed for executive decisions—benchmarked, repeatable, and tied to measurable outcomes.